Citizen science data release

Our goal

To enable further research in bioacoustics, we release a new dataset of mosquito audio recordings. With the help of over a thousand contributors on the Zooniverse citizen science platform, we obtained 195,434 labels, each applied to a two-second audio clip, of which approximately 10% signify logged mosquito events. We hope this will provide a useful resource for those researching malaria epidemiology, and add to the existing audio datasets available for bioacoustic detection and signal processing.

Data and Code

For more details about the data, and how it can be used to build machine learning models, please see our full publication, Humbug Zooniverse: A Crowd-Sourced Acoustic Mosquito Dataset, presented at ICASSP 2020 and also available on arXiv. The corresponding code and instructions for downloading the data are in our GitHub repository.

Data collection and tagging

To improve the performance of existing algorithms and encourage the development of more data-driven approaches to mosquito detection, we release this crowdsourced dataset. It contains a mixture of mosquito species recorded in both laboratory and field conditions.

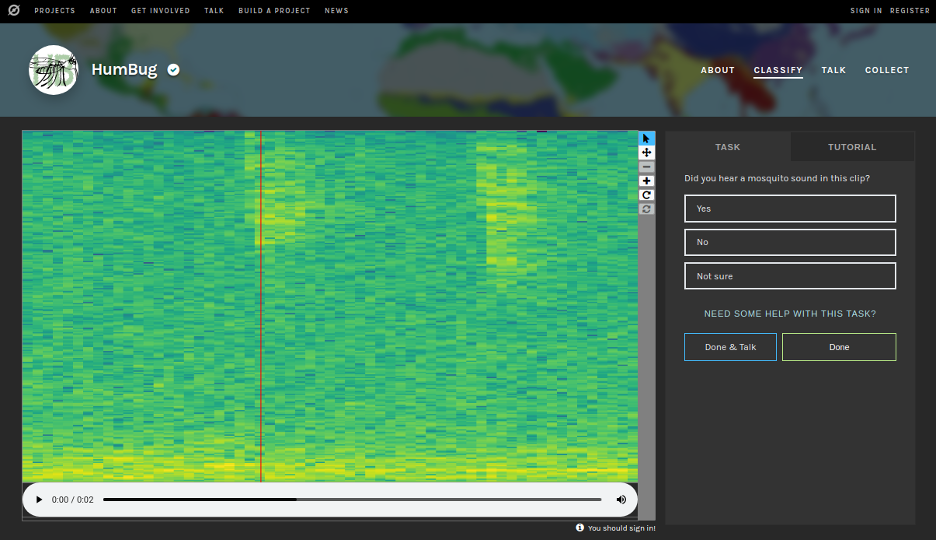

Volunteers listen to two-second sound clips and can also see the corresponding spectrograms (more information here). They then select ‘Yes’, ‘No’, or ‘Not sure’ on the interface, depending on how confident they are that the clip contains the sound of a mosquito (see Fig. 1). The total number of participants for this release of the dataset is 1,316. Consecutive audio clips overlap by one second so that each section of audio is covered by at least two labels.

Figure 1: User interface for the classification page of the Zooniverse HumBug project, found at https://www.zooniverse.org/projects/yli/humbug/. A short-time Fourier transform spectrogram is shown above the audio file. Users classify each two-second segment as ‘Yes’, ‘No’, or ‘Not sure’.
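The one-second overlap between consecutive two-second clips can be illustrated with a minimal sketch. The function names `clip_windows` and `coverage` are hypothetical and are not taken from the project code; the sketch assumes a single contiguous recording whose length is a whole number of seconds.

```python
def clip_windows(duration_s, clip_s=2, hop_s=1):
    """Return (start, end) times in seconds of overlapping clips.

    Assumption: clips are cut with a fixed hop, and any trailing audio
    shorter than one full clip is dropped.
    """
    windows = []
    start = 0
    while start + clip_s <= duration_s:
        windows.append((start, start + clip_s))
        start += hop_s
    return windows


def coverage(windows, duration_s):
    """Count how many clips fully contain each one-second section."""
    return [
        sum(1 for start, end in windows if start <= t and t + 1 <= end)
        for t in range(duration_s)
    ]


windows = clip_windows(5)
print(windows)               # [(0, 2), (1, 3), (2, 4), (3, 5)]
print(coverage(windows, 5))  # [1, 2, 2, 2, 1]
```

In this sketch every interior second falls inside two clips, while the first and last second of the recording fall inside only one. How the released data treats recording boundaries is not covered by this illustration.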

The recordings were obtained from four sources, which we group as follows:

- Group A consists of recordings from laboratory-based mosquito colonies held in Oxford, UK, providing acoustic data for Culex quinquefasciatus, a vector of the West Nile virus. The culture cages contained both male and female insects.

- Group B was acquired from laboratory-based mosquito colonies at the United States Army Medical Research Unit, Kenya (USAMRU-K), providing acoustic data for Anopheles gambiae, the primary vector of malaria in Africa.

- Group C consists of recordings of multiple species made at the insectary of the Centers for Disease Control and Prevention (CDC), Atlanta, USA, including Aedes aegypti and Aedes albopictus, vectors of yellow fever and dengue fever respectively.

- Group D is formed from recordings of wild-captured mosquitoes (including Anopheles barbirostris and Anopheles maculatus, Asian vectors of malaria), sampled in Pu Teuy Village, Sai Yok District, Kanchanaburi Province, Thailand. The village is a field site of Kasetsart University, Bangkok.

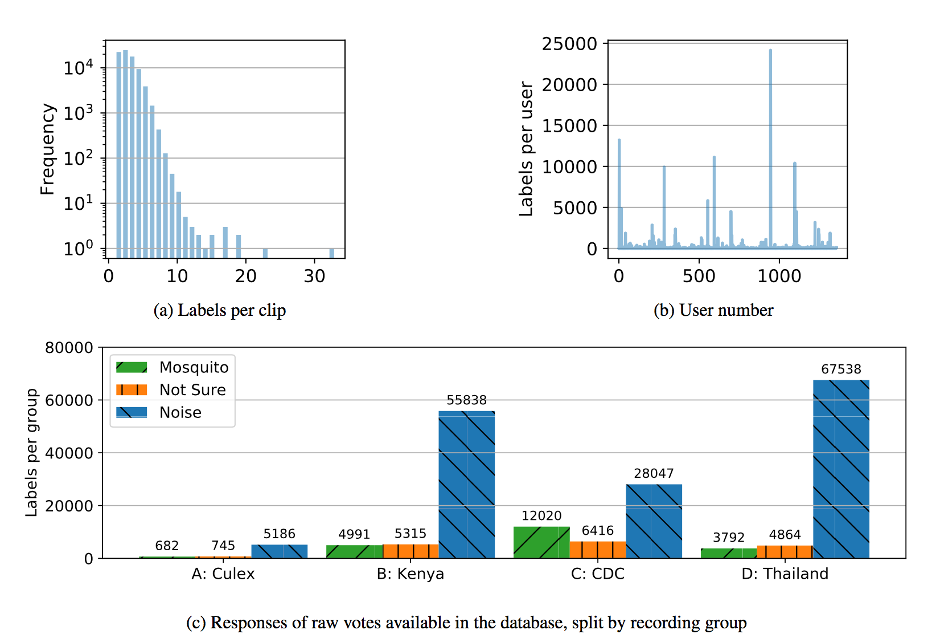

Figure 2: Statistics of the crowdsourced Zooniverse dataset. In total, 195,434 classifications were made on 80,101 overlapping two-second clips (each with a unique audio_id), giving a 22-hour dataset of unique audio. 57,710 (72%) of the audio samples have more than one label, of which 10,487 (18%) show disagreement between labels. In total, approximately 1 in 10 recordings contains an audible mosquito, with the distribution given in (c).
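As a rough check of the figures in the caption, the sketch below groups labels by `audio_id` and recomputes the headline percentages. It rests on assumptions that are not confirmed by the source: classifications are represented as simple `(audio_id, label)` pairs, "disagreement" means a clip received more than one distinct label, and each overlapping clip contributes about one second of new audio. The function `summarise_labels` and the toy data are hypothetical.

```python
from collections import defaultdict


def summarise_labels(classifications):
    """Summarise (audio_id, label) pairs.

    Assumption: a clip shows disagreement when its labels are not all equal.
    """
    labels_by_clip = defaultdict(list)
    for audio_id, label in classifications:
        labels_by_clip[audio_id].append(label)

    # Clips that received more than one label.
    multi = {k: v for k, v in labels_by_clip.items() if len(v) > 1}
    # Among those, clips whose labels differ.
    disagree = [k for k, v in multi.items() if len(set(v)) > 1]
    return {
        "clips": len(labels_by_clip),
        "multi_labelled": len(multi),
        "disagreement": len(disagree),
    }


# Toy data for illustration only, not taken from the dataset.
toy = [("a", "Yes"), ("a", "Yes"), ("b", "No"), ("b", "Not sure"), ("c", "No")]
summary = summarise_labels(toy)
print(summary)  # {'clips': 3, 'multi_labelled': 2, 'disagreement': 1}

# Arithmetic on the counts quoted in the caption.
n_clips, n_multi, n_disagree = 80_101, 57_710, 10_487
pct_multi = round(100 * n_multi / n_clips)       # 72
pct_disagree = round(100 * n_disagree / n_multi)  # 18
# Assumed: one second of new audio per clip, given the one-second overlap.
hours_unique = n_clips * 1 / 3600                 # a little over 22 hours
print(pct_multi, pct_disagree, hours_unique)
```

The recomputed values agree with the 72%, 18%, and 22-hour figures quoted in the caption under these assumptions.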

Machine learning models

For insight into how machine learning models can be built from data such as this, please visit our 'Models' page. A full Python 3 reproduction of the metadata code is available as Jupyter notebooks at https://github.com/HumBug-Mosquito/ZooniverseData. We supply the tools to transform the data into intermediate feature representations directly in the following notebook.